It always amuses me when I hear people talk about liquid cooling as “the new frontier.” My first introduction to the industry was as a 16-year-old doing work experience in an East London data center. My primary task? Helping to rip out massive amounts of liquid cooling infrastructure.

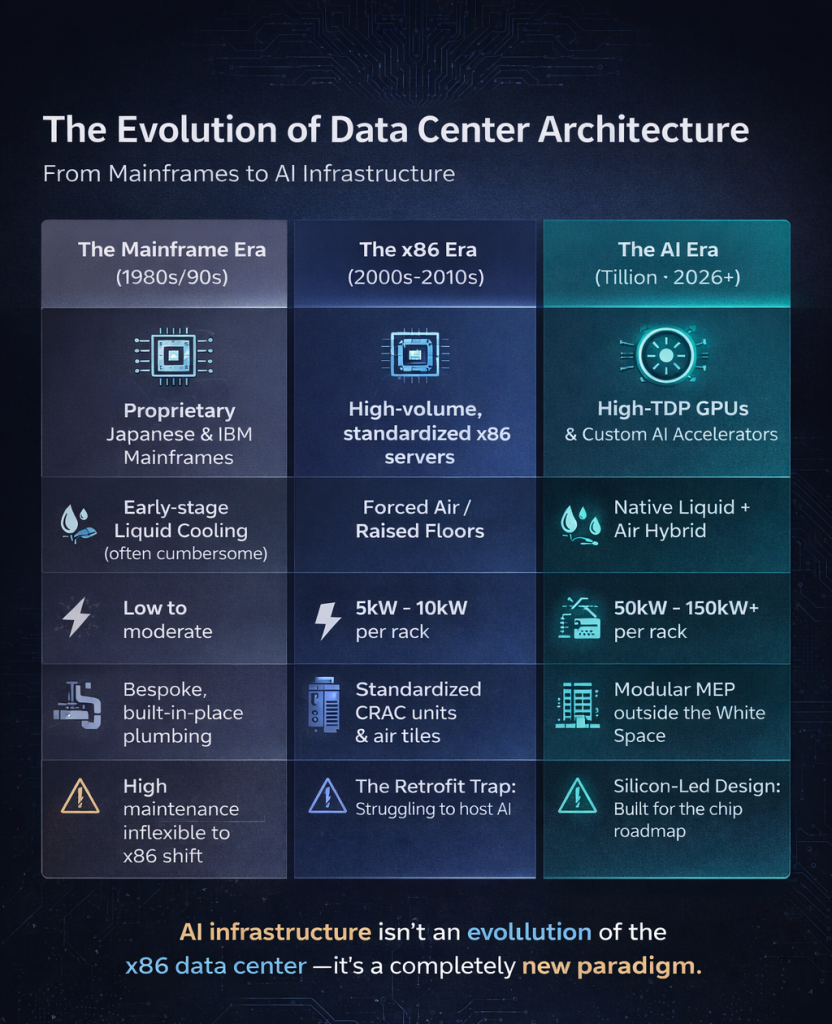

At the time, that infrastructure had been painstakingly installed to support the high-density cooling loads of Japanese banking mainframes. But then, the x86 revolution arrived. Suddenly, we had cheap, air-cooled hardware that changed the game overnight. That “advanced” liquid cooling became a liability—a physical obstruction to airflow that ate up valuable, sellable white space.

The Retrofit Trap

Fast forward twenty years, and the industry has come full circle. Those same facilities (and many built just a few years ago) are now scrambling to understand how to retrofit liquid cooling back into shells that were never designed for it.

I recently joined a panel with Data Center Dynamics on exactly this challenge. The conversation was broad, but five “hard truths” emerged about where the industry is heading:

Five Pillars of the New Infrastructure Reality

The “Sub-Optimal” Ceiling: You can retrofit a legacy facility to be liquid-ready, but you will likely never achieve an optimal outcome. The compromises in floor loading, pipe routing, and power density are simply too great.

The End of Decoupling: Cooling is no longer a general utility. It has become tightly coupled to the IT stack, mirroring the path that power distribution took a decade ago. If you don’t understand the chip, you can’t cool the room.

Keep the White Space “White”: We need to stop cluttering IT space with MEP (Mechanical, Electrical, and Plumbing) equipment like CDUs (Coolant Distribution Units) and rectifiers. History has shown us that a clean separation between facility infrastructure and IT hardware leads to better reliability and scalability.

The Rise of Cross-Industry Partnerships: The sheer scale of modern facilities is attracting experts from nuclear, oil and gas, and chemical engineering. We are moving toward a modular future, where data centers are assembled from a small number of massive, pre-engineered mechanical and electrical modules.

Watch the Silicon, Not the App: Applications and customer demands change in a heartbeat, but silicon evolution moves at a more predictable, physical pace. If you want to know what a data center will look like in five years, look at the TDP (Thermal Design Power) of the chips currently on the fabrication roadmap.
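As a rough illustration of that last point, the arithmetic from chip TDP to rack load can be sketched in a few lines of Python. The 1,000 W and 2,000 W figures are the chip classes mentioned in this article; the 64 accelerators per rack and the 25% allowance for CPUs, memory, networking, and power conversion losses are purely illustrative assumptions, not figures from any vendor roadmap or from our designs.

```python
def rack_power_kw(chip_tdp_w: float, chips_per_rack: int,
                  overhead_fraction: float = 0.25) -> float:
    """Estimate rack load in kW from accelerator TDP.

    overhead_fraction is an assumed allowance for everything in the rack
    that is not an accelerator (CPUs, memory, NICs, conversion losses).
    """
    return chip_tdp_w * chips_per_rack * (1 + overhead_fraction) / 1000


# Hypothetical rack of 64 accelerators at two TDP classes.
for tdp_w in (1000, 2000):
    print(f"{tdp_w} W chips: {rack_power_kw(tdp_w, 64):.0f} kW per rack")
```

Under these assumptions, doubling chip TDP doubles the rack load from 80 kW to 160 kW, which is why the silicon roadmap is a useful first input when sizing power and cooling.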

The Tillion Advantage: Designing for the Roadmap

At Tillion, we aren’t guessing what the future looks like; we are looking at the blueprints of the silicon manufacturers. By maintaining a direct line of contact with the giants of chip fabrication, we design our facilities to be “future-proof” by default, not by retrofit.

Our Zaragoza 1 site reflects this philosophy:

- Modular MEP: High-capacity cooling and power modules located outside the white space.

- Hybrid Flexibility: A design that respects the legacy of air cooling while providing the thermal headroom required for the next generation of 1,000 W+ GPUs.

The Tillion Design Philosophy

- Clean White Space: We’ve moved CDUs (Coolant Distribution Units) and rectifiers out to the “gray space.” This keeps the IT floor dedicated to compute and simplifies the interface between the facility and the hardware.

- Modular Scalability: Drawing inspiration from the nuclear and chemical industries, we use large-scale mechanical modules. This allows us to scale the Zaragoza 1 site to 300 MW without the “Frankenstein” patchwork common in expanded legacy sites.

- Direct-to-Chip Ready: Every rack position in our facility is plumbed and powered for the 2,000 W+ chips we expect over the next decade, so our partners never hit a “thermal ceiling.”
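To give a sense of what “plumbed” means in practice, here is a minimal sketch of the standard heat balance Q = ṁ · c_p · ΔT, solved for coolant flow. Everything numeric here is an assumption for illustration: a hypothetical 100 kW rack, plain water as the coolant (real loops often use a water-glycol mix with a lower specific heat), a 10 K temperature rise across the rack, and all heat captured by the liquid loop.

```python
WATER_CP_J_PER_KG_K = 4186.0    # approximate specific heat of water
WATER_DENSITY_KG_PER_L = 1.0    # approximate density of water


def coolant_flow_l_per_min(heat_kw: float, delta_t_k: float) -> float:
    """Flow needed to carry heat_kw away with a delta_t_k coolant temperature rise."""
    mass_flow_kg_s = heat_kw * 1000 / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60


# Hypothetical 100 kW rack with a 10 K rise across the loop.
print(f"{coolant_flow_l_per_min(100, 10):.0f} L/min")
```

With these inputs the result is roughly 143 L/min for a single rack. Halving the allowed temperature rise doubles the required flow, which is one reason pipe sizing is so hard to bolt on after the fact.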

We don’t want to be the ones ripping out infrastructure in another twenty years. We are building the flexible, liquid-native backbone that the AI century demands.